Can be used to monitor for throughput dropping under the expected threshold.Number of messages popped out of the queue for processing, but not necessarily deleted. If messages are getting stale, this could cause other application issues or degradation to the user experience (UX) if the messages relate to a customer-facing application. Note: In the ideal world, messages should be processed in a timely fashion, relative to expectations. Stale messages in the queue might indicate that the worker: Has a downstream dependency which is unavailable causing processing failuresĪpproximate number of seconds the oldest message in the queue has been waiting for processing.Is experiencing a large influx of incoming messages.Isn’t consuming messages fast enough and might need to scale horizontally (autoscaling).Messages become visible again after the receive timeout expires or is programmatically expired.Īn excessive queue length might indicate that the worker:

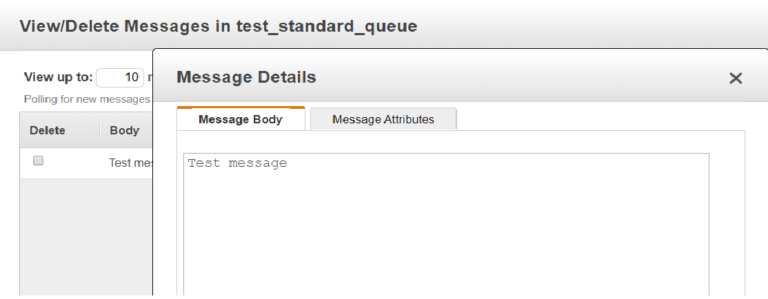

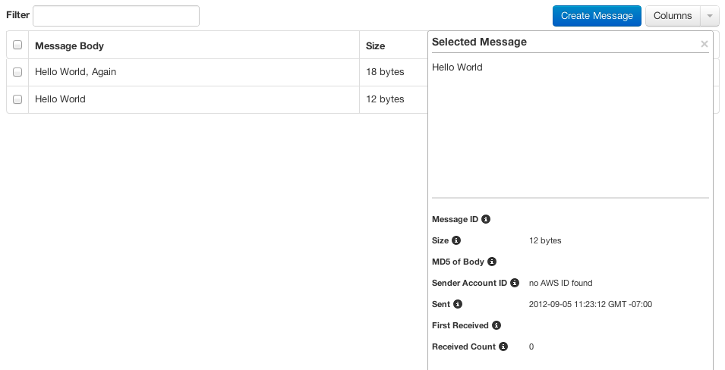

Number of messages which have not yet been locked by a worker. In this scenario, one might want to configure monitoring and alerting on CloudWatch metrics in the SQS namespace such as: ApproximateNumberOfMessagesVisible Let’s say you have a microservice that processes messages from an SQS queue. Here’s an example that illustrates how we can use the SQS CloudWatch metrics namespace to tell a tale about our application performance: If you’re not sure about what they mean, read into the AWS documentation and try to understand what type of value for a given metric would indicate your application isn’t behaving as expected. Our job as cloud engineers is to consider that the metrics of the service we’re leveraging tells a story. AWS provides a plethora of CloudWatch metrics for each service they offer which can serve as a foundation for monitoring your AWS dependencies. All of the potential ways applications can misbehave is interesting, which is why I think I enjoy programming and systems so much! CloudWatch Metricsīeyond the basics of systems monitoring, if your application is leveraging AWS services to fulfill its purpose, those resources should be monitored adequately as well. Sometimes, a spike could indicate a local system bottleneck, such as disk I/O, or a downstream dependent system which suddenly causes things to back up in memory. In my experience, when digging through application logs while investigating spikes, we often uncover unexpected or untested permutations of payload sizes, request types, or different code paths which make our application act on the fritz. By monitoring our core metrics, when we become aware of abnormalities in the service’s behavior, we’re given the opportunity to trace through what was happening in our application during that time. We want to understand how much memory or cpu an application uses normally, but also how much it uses when the application is under heavy load. 
For starters, leveraging the average and maximum of these statistics is a good route to go. The statistics you choose to leverage when building monitoring dashboards and alerting is important in order to get a comprehensive overview of how an application behaves. Statistics to Use for Monitoring your Applications I’ll also be going into how an APM and custom application metrics can fit into your monitoring system, and how it helps save you time investigating issues, thus improving your incident resolution times. In this article, I’m going to share with you a practical queue worker monitoring example which should help get you thinking about how to monitor your application. There are also some simple cron-based scripts out there that you can deploy using user data scripts which will post memory metrics to CloudWatch for you. If you’re not running in Docker/ ECS, gaining access to memory metrics for an application running on EC2 instances is a little bit more work, but with a solid monitoring agent you should be able to get memory utilization published to your monitoring platform in no time. CloudWatch is the service that provides these metrics to you, and underpins much of the alerting and autoscaling magic that’s available to you in AWS. If you’re running your application services in Amazon ECS, AWS provides the foundational Memory and CPU utilization metrics you should target for monitoring right from the gate. There are a plethora of different tools at your disposal in the AWS ecosystem to configure application monitoring and alerting. The best time to start thinking about monitoring your applications is before it goes to production. Building out a sufficient monitoring suite is critical to measuring the performance and availability of your services.
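
The cron-based approach to publishing EC2 memory metrics mentioned above can be sketched roughly as follows. This is a minimal sketch, assuming a Linux host that exposes MemAvailable in /proc/meminfo. The Custom/EC2 namespace, the MemoryUtilization metric name, and the helper functions are hypothetical placeholders, not taken from any particular script. The sketch only builds the keyword arguments for CloudWatch's PutMetricData call, so it runs without AWS credentials.

```python
from datetime import datetime, timezone


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field name: value in kB}."""
    fields: dict[str, int] = {}
    for line in text.splitlines():
        if ":" not in line:
            continue
        name, rest = line.split(":", 1)
        parts = rest.split()
        if parts:
            fields[name.strip()] = int(parts[0])
    return fields


def memory_utilization_percent(meminfo: dict[str, int]) -> float:
    """Percentage of memory in use, treating MemAvailable as reclaimable."""
    total = meminfo["MemTotal"]
    available = meminfo["MemAvailable"]
    return round(100.0 * (total - available) / total, 2)


def build_metric_data(instance_id: str, utilization: float) -> dict:
    """Keyword arguments for a CloudWatch PutMetricData call.

    The namespace and metric name are hypothetical placeholders.
    """
    return {
        "Namespace": "Custom/EC2",  # hypothetical custom namespace
        "MetricData": [
            {
                "MetricName": "MemoryUtilization",  # hypothetical name
                "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
                "Timestamp": datetime.now(timezone.utc),
                "Value": utilization,
                "Unit": "Percent",
            }
        ],
    }
```

On an instance, a cron job could read /proc/meminfo, pass it through these helpers, and send the result with boto3's put_metric_data(**payload) on a CloudWatch client.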

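The CPU samples below are hypothetical. They show why both the average and the maximum matter: a short burst of heavy load barely moves the average but shows up clearly in the maximum. This is an illustrative sketch with made-up numbers, not real data.

```python
from statistics import mean


def summarize(samples: list[float]) -> dict[str, float]:
    """Return the Average and Maximum of a window of utilization samples."""
    return {"Average": round(mean(samples), 2), "Maximum": max(samples)}


# Hypothetical one-minute CPU utilization samples (%) over ten minutes,
# with a two-minute burst of heavy load in the middle.
cpu_samples = [22.0, 24.0, 21.0, 23.0, 95.0, 97.0, 25.0, 22.0, 24.0, 23.0]
summary = summarize(cpu_samples)
```

Here the average comes out at 37.6% while the maximum is 97%. An alarm at, say, 80% on the average alone would miss the burst entirely.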
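
The SQS queue worker scenario above could be turned into CloudWatch alarms along these lines. This is a sketch under several assumptions:

- The oldest-message-age and messages-received descriptions correspond to the SQS metrics ApproximateAgeOfOldestMessage and NumberOfMessagesReceived.
- The thresholds, period, evaluation count, queue name, and SNS topic ARN are hypothetical values you would tune to your own workload.

The helpers only build keyword arguments for CloudWatch's PutMetricAlarm call, so they run without AWS access.

```python
def sqs_alarm(queue_name: str, metric_name: str, statistic: str,
              threshold: float, comparison: str, topic_arn: str) -> dict:
    """Build keyword arguments for CloudWatch PutMetricAlarm on one SQS metric."""
    return {
        "AlarmName": f"{queue_name}-{metric_name}",
        "Namespace": "AWS/SQS",
        "MetricName": metric_name,
        "Dimensions": [{"Name": "QueueName", "Value": queue_name}],
        "Statistic": statistic,
        "Period": 300,           # five-minute buckets (assumed value)
        "EvaluationPeriods": 3,  # three breaching periods in a row (assumed)
        "Threshold": threshold,
        "ComparisonOperator": comparison,
        "AlarmActions": [topic_arn],  # e.g. an SNS topic that notifies the team
    }


def worker_queue_alarms(queue_name: str, topic_arn: str) -> list[dict]:
    """Hypothetical alarm set for a queue worker; thresholds are placeholders."""
    return [
        # Backlog: more than 1,000 messages waiting to be picked up.
        sqs_alarm(queue_name, "ApproximateNumberOfMessagesVisible", "Maximum",
                  1000, "GreaterThanThreshold", topic_arn),
        # Staleness: the oldest message has waited longer than 10 minutes.
        sqs_alarm(queue_name, "ApproximateAgeOfOldestMessage", "Maximum",
                  600, "GreaterThanThreshold", topic_arn),
        # Throughput: fewer than 50 messages received in a five-minute period.
        sqs_alarm(queue_name, "NumberOfMessagesReceived", "Sum",
                  50, "LessThanThreshold", topic_arn),
    ]
```

With boto3 and credentials available, each definition could be applied with put_metric_alarm(**alarm) on a CloudWatch client. Using Maximum for the backlog and age alarms reacts to the worst point in each period, while Sum over NumberOfMessagesReceived measures total throughput per period.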